Cut down on your Lambda cost

With serverless coming more and more into focus, services like AWS Lambda offer a simple, easy-to-understand approach to the next step in cloud computing. But "serverless" does not mean "no servers". As a developer, you just don't have to think about managing them. The servers are still there, but their role has changed.

Here's what to consider in terms of cost optimization.

Price calculation

There are 3 factors that determine the cost of your Lambda function:

Strategies

1. Optimizing Function Frequency

Various factors affect the invocation frequency of a Lambda function, and they all come down to its triggers. Monitor your triggers closely and see what you can do to reduce the number of invocations over time.

For example, suppose your Lambda function is triggered by a Kinesis stream. If the batch size is small, the invocation frequency will be high. In such cases, you can opt for a larger batch size so that your Lambda function is invoked less frequently.
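The effect of batch size on invocation count can be estimated with a little arithmetic. The following is a minimal sketch, not a model of a real stream: the function name `invocations_for` and the daily record volume are hypothetical, and it assumes every batch is completely full, so it gives a lower bound on the real number of invocations.

```python
import math


def invocations_for(records: int, batch_size: int) -> int:
    """Estimate the invocations needed to process `records` records
    when each invocation receives at most `batch_size` records.

    Assumes every batch is full, so this is a lower bound.
    """
    if batch_size <= 0:
        raise ValueError("batch_size must be positive")
    return math.ceil(records / batch_size)


if __name__ == "__main__":
    records_per_day = 1_000_000  # hypothetical daily volume
    for batch_size in (10, 100, 1000):
        print(batch_size, invocations_for(records_per_day, batch_size))
```

Under these assumptions, a hundredfold increase in batch size cuts the number of invocations by roughly the same factor, although each invocation then does more work and runs longer.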

2. Writing Efficient Code

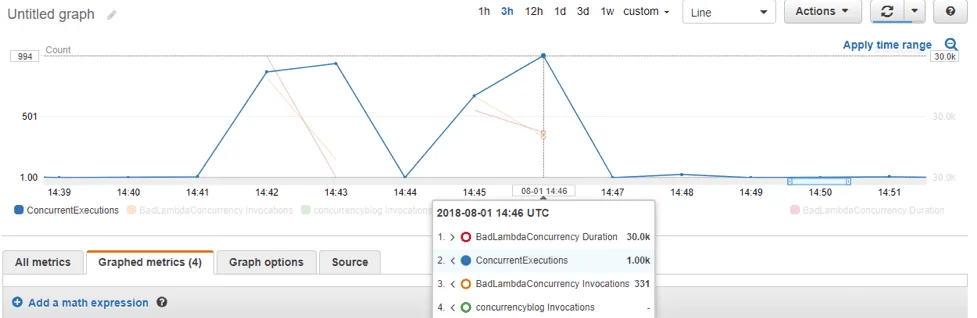

A function that executes in half the time incurs half the duration charge, because the amount you are billed is directly proportional to execution duration. Keep an eye on the Duration metric in CloudWatch, and revise your function if it takes suspiciously long to execute.

For this purpose, AWS X-Ray can help you monitor your function from end to end.
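To make the link between duration and cost concrete, here is a minimal sketch of the duration charge. The function name `duration_cost` is hypothetical, and the default price per GB-second is an assumed example value that varies by region and architecture, so check the current AWS pricing page before relying on it. The per-request charge is deliberately left out.

```python
def duration_cost(
    invocations: int,
    avg_duration_ms: float,
    memory_mb: int,
    price_per_gb_second: float = 0.0000166667,  # assumed example rate
) -> float:
    """Return the duration charge for a number of invocations.

    Billed compute is measured in GB-seconds: duration in seconds
    multiplied by configured memory in GB.
    """
    gb_seconds = invocations * (avg_duration_ms / 1000) * (memory_mb / 1024)
    return gb_seconds * price_per_gb_second


if __name__ == "__main__":
    # Hypothetical workload: one million invocations at 512 MB.
    slow = duration_cost(1_000_000, avg_duration_ms=400, memory_mb=512)
    fast = duration_cost(1_000_000, avg_duration_ms=200, memory_mb=512)
    print(f"400 ms: ${slow:.2f}  200 ms: ${fast:.2f}")
```

Whatever rate applies, the duration charge scales linearly with average duration when memory and invocation count stay the same.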

One of the most overlooked aspects is that AWS Lambda cold starts happen for each concurrent execution. Therefore, as a first step in optimizing your costs, make sure your functions are invoked at a frequency that avoids cold starts as much as possible. An approach that works most of the time is to group the work into bigger batches.

3. Monitoring Data Transfer

When talking about Lambda charges, it is easy to overlook that you are also charged for data transfer at standard EC2 data transfer rates. Hence, it is prudent to keep an eye on the amount of data you transfer out to the internet and to other AWS regions.

As for internal data transfer, there isn’t much you can do about it, as you can’t control the amount of data your Lambda will transfer. Likewise, there is no metric in CloudWatch that helps you monitor the data transfer rate.

In such cases, here’s what you can do:

- Closely monitor your AWS Cost & Usage Report. Filter it by resource (your Lambda function), look at the different values in the Transfer Type column, and read off the usage amount for each. This can be slightly time-consuming.

- Log the size of data transfer operations in your Lambda code, then configure a CloudWatch Metric Filter on those log entries so that the logged sizes become a CloudWatch Metric.
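The logging approach from the second point might look like the sketch below. The helper names, the `bytes_out` marker, and the field names are all hypothetical choices; the only requirement is that each transfer produces one structured log line that a metric filter can match. It uses `print` so that the JSON reaches the log stream without a logger prefix.

```python
import json


def transfer_log_line(destination: str, payload: bytes) -> str:
    """Build one JSON log line describing an outbound transfer."""
    return json.dumps(
        {
            "metric": "bytes_out",  # hypothetical marker for the filter
            "destination": destination,
            "bytes": len(payload),
        }
    )


def log_transfer(destination: str, payload: bytes) -> None:
    """Write the transfer size to stdout, which Lambda forwards to
    CloudWatch Logs."""
    print(transfer_log_line(destination, payload))


if __name__ == "__main__":
    log_transfer("https://api.example.com/upload", b"example payload")
```

A metric filter with a JSON pattern such as `{ $.metric = "bytes_out" }` and a metric value of `$.bytes` could then turn these lines into a metric, assuming the log group receives the lines unmodified.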

4. Right Sizing

When you provision memory for your Lambda, AWS allocates CPU proportionally (e.g. a 256 MB function receives twice the processing power of a 128 MB function). Depending on your workload, this may give you a decent performance boost at a higher price per unit of billed time. If the function executes fast enough, the shorter billed duration can offset the higher rate, so it is worth checking whether more memory is more cost efficient in the long run.

Execution may halt immediately if the function runs out of its configured memory. Changes to the function over time may alter its memory usage, so it's best to monitor this.

Tools like AWS Lambda Power Tuning may help you find the break-even point for cost and/or performance by testing different memory sizes.
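The break-even idea can be illustrated with a small comparison of billed GB-seconds per invocation. This is only a sketch: the function name `cheapest_config` is hypothetical and the durations are made-up measurements, not benchmark results. Because the price per GB-second does not depend on the memory setting, comparing GB-seconds is enough to rank the configurations.

```python
def cheapest_config(measurements: dict[int, float]) -> tuple[int, float]:
    """Pick the memory size with the lowest billed GB-seconds per invocation.

    `measurements` maps memory in MB to the measured average duration in ms.
    Returns (memory_mb, gb_seconds_per_invocation).
    """
    if not measurements:
        raise ValueError("measurements must not be empty")
    gb_seconds = {
        memory_mb: (memory_mb / 1024) * (duration_ms / 1000)
        for memory_mb, duration_ms in measurements.items()
    }
    best = min(gb_seconds, key=gb_seconds.get)
    return best, gb_seconds[best]


if __name__ == "__main__":
    # Hypothetical measurements: memory in MB -> average duration in ms.
    measured = {128: 1000.0, 256: 450.0, 512: 300.0}
    memory_mb, cost_units = cheapest_config(measured)
    print(memory_mb, cost_units)
```

In this made-up data set, doubling memory from 128 MB to 256 MB more than halves the duration, so 256 MB is cheaper per invocation, while 512 MB is faster still but no longer pays for itself.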
